Clawdbot (Moltbot) Explained

How This “Personal AI Agent” Actually Works

Giving an AI full control over your computer sounds powerful. It also sounds reckless.

Clawdbot, now rebranded as Moltbot/OpenClaw, sits right at that intersection. Viral demos make it look like Jarvis finally landed.

Reddit threads call it a supply-chain attack waiting to happen.

So let’s strip away hype and backlash.

Here’s what Clawdbot actually is, how it works under the hood, where it genuinely creates leverage, and where the risks become non-trivial.

What Clawdbot Actually Is

Clawdbot is an open-source, local-first AI agent that runs on your own machine and executes real system-level actions through messaging apps.

Unlike a typical chatbot, this is not just text-in, text-out. It runs continuously on hardware you control, often a Mac mini or home server, and connects to messaging platforms like Telegram, Discord, WhatsApp, Signal, or iMessage.

You talk to it like you’d message a colleague. It responds and, more importantly, acts.

It plugs into commercial LLM APIs such as Claude or OpenAI models. The reasoning happens in the cloud. The execution happens on your machine.

Key characteristics:

Stores long-term memory locally (often in Markdown files).

Can read and write files.

Can execute shell commands.

Can automate browser sessions.

Can connect to email and calendar systems.

Originally called “Clawdbot,” it was rebranded to Moltbot/OpenClaw after trademark pressure from Anthropic.

That naming drama fed into broader trust concerns, which are covered further down.

The important distinction: this is an agent, not a chatbot. A chatbot talks. An agent does.

How Does the Clawdbot Architecture Work?

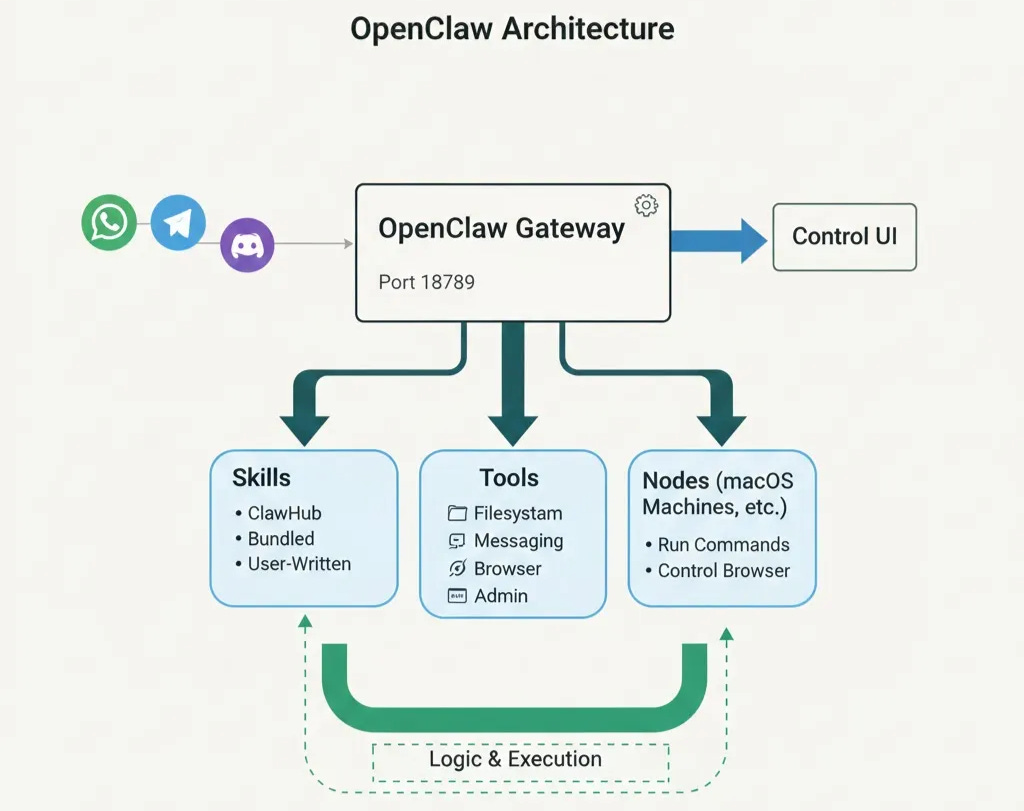

Clawdbot is a message-driven orchestration loop layered over LLM reasoning and tool execution.

There’s no magic. The architecture is surprisingly straightforward.

1. Messaging gateway layer

You send a message through Telegram, Discord, WhatsApp, Slack, or another platform. That message is captured via bot tokens and passed into the agent system.

This is why setup often feels like DevOps work; you have to configure APIs, intents, permissions, and tokens correctly.

2. LLM reasoning layer

The message is forwarded to a model (Claude, OpenAI, etc.) via API. The LLM analyzes intent and decides which “tool” to use.

For example:

“Check if my server is up.”

“Clean up my inbox.”

“Book the cheapest flight.”

The LLM outputs structured instructions describing which action to take.
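To make "structured instructions" concrete, here is a minimal sketch of parsing and validating a tool call before anything runs. The `tool` and `args` field names and the tool list are hypothetical, chosen for illustration; they are not Clawdbot's actual schema.

```python
import json

# Hypothetical tool names for illustration; not Clawdbot's real tool list.
KNOWN_TOOLS = {"shell", "browser", "email"}


def parse_tool_call(raw: str) -> dict:
    """Parse and validate a JSON tool call emitted by the model."""
    call = json.loads(raw)
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    tool = call.get("tool")
    if tool not in KNOWN_TOOLS:
        raise ValueError(f"unknown tool: {tool!r}")
    args = call.get("args", {})
    if not isinstance(args, dict):
        raise ValueError("args must be a JSON object")
    return {"tool": tool, "args": args}


# "Check if my server is up." might come back from the model as:
example = '{"tool": "shell", "args": {"command": "ping -c 1 example.com"}}'
print(parse_tool_call(example))
```

Rejecting anything outside a known tool set at this step is cheap, and it keeps malformed or unexpected model output away from the execution layer.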

3. Tool execution layer

This is where the real power and danger live.

Clawdbot can:

Launch browser sessions.

Fill web forms.

Execute shell commands.

Modify files.

Query APIs.

Send emails.

Access calendars.

Once executed, the results are returned to the model and then back to you via messaging.
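The message, reason, act, report cycle described above can be sketched in a few lines. This is a simplified illustration, not Clawdbot's code: `fake_model` stands in for the real LLM API call, `check_server` is a stub tool, and the decision format is an assumption.

```python
from typing import Callable

# A tool takes keyword arguments and returns a text result.
Tool = Callable[..., str]


def run_agent(message: str, model: Callable[[list], dict],
              tools: dict[str, Tool], max_steps: int = 5) -> str:
    """Loop: ask the model, run the requested tool, feed the result back."""
    history = [{"role": "user", "content": message}]
    for _ in range(max_steps):
        decision = model(history)
        if decision["type"] == "reply":
            return decision["text"]  # final answer goes back to the chat
        tool = tools[decision["tool"]]
        result = tool(**decision["args"])
        history.append({"role": "tool", "content": result})
    return "Stopped: step limit reached."


def fake_model(history: list) -> dict:
    """Stand-in for the LLM API call: request one tool, then answer."""
    if history[-1]["role"] == "user":
        return {"type": "tool", "tool": "check_server",
                "args": {"host": "example.com"}}
    return {"type": "reply", "text": f"Result: {history[-1]['content']}"}


def check_server(host: str) -> str:
    """Stub tool; a real one would make a network request."""
    return f"{host} is up"


print(run_agent("Check if my server is up.", fake_model,
                {"check_server": check_server}))
# prints "Result: example.com is up"
```

The `max_steps` cap is a design choice worth keeping in any real loop: without it, a confused model can keep calling tools, and burning tokens, indefinitely.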

4. Memory persistence

Conversation history and preferences are often stored locally as structured files. This creates long-term continuity.
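A file-based memory like this needs very little machinery. The sketch below appends dated notes to one Markdown file per topic and reads them back. The file layout and the function names `remember` and `recall` are assumptions for illustration, not Clawdbot's actual storage format.

```python
from datetime import date
from pathlib import Path


def remember(memory_dir: Path, topic: str, note: str, day: date) -> Path:
    """Append a dated bullet to <memory_dir>/<topic>.md, creating it if needed."""
    memory_dir.mkdir(parents=True, exist_ok=True)
    path = memory_dir / f"{topic}.md"
    if not path.exists():
        path.write_text(f"# {topic}\n\n", encoding="utf-8")
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- {day.isoformat()}: {note}\n")
    return path


def recall(memory_dir: Path, topic: str) -> list[str]:
    """Return the stored notes for a topic, oldest first."""
    path = memory_dir / f"{topic}.md"
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    return [line[2:] for line in lines if line.startswith("- ")]
```

Plain Markdown has one practical upside: you can open the files, read exactly what the agent "knows" about you, and delete anything you do not want it to keep.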

Some users experiment with local LLMs via Ollama. But many report that tool-use workflows break unless models have large context windows (16k+ tokens). Configuration complexity rises quickly.

From an enterprise architecture perspective, this is a lightweight orchestration engine with local execution privileges.

When we design AI agents at Troniex Technologies, we use similar layered orchestration patterns, but we isolate execution environments and enforce policy constraints to reduce blast radius.

Clawdbot, by contrast, assumes you are your own security team.

Why Users Say It Feels Like “Jarvis”

Proactive messaging plus full-system access makes it feel like an AI employee.

What differentiates Clawdbot from ChatGPT is not intelligence, but behavior.

It messages you first.

Users configure:

Morning briefings.

Server downtime alerts.

Calendar reminders.

Task nudges.

Instead of pulling up a browser tab, the agent pushes information to you.
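Under the hood, "messaging you first" is mostly scheduling. As a minimal sketch, assuming a daily job such as a morning briefing, the helper below works out when the job should next fire. The function name is hypothetical, and a real setup might simply use cron or a scheduler library instead.

```python
from datetime import datetime, time, timedelta


def next_run(now: datetime, at: time) -> datetime:
    """Return the next datetime at which a daily job scheduled for `at` fires."""
    candidate = datetime.combine(now.date(), at)
    if candidate <= now:
        # Today's slot has already passed, so schedule for tomorrow.
        candidate += timedelta(days=1)
    return candidate


# A 07:00 briefing, checked at 08:30, is next due tomorrow morning.
print(next_run(datetime(2025, 1, 1, 8, 30), time(7, 0)))
```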

Common use cases reported:

Inbox triage across hundreds of emails.

Automated airline check-ins.

Monitoring servers and services 24/7.

Filling repetitive web forms.

Drafting content based on social media trends.

Negotiating purchases while the user sleeps.

Chatting with website visitors via Telegram/WhatsApp, collecting prospect details, and filtering leads.

The messaging-first UX is powerful. It feels persistent. It feels alive.

But here’s the key difference between novelty and leverage:

If the workflow runs end-to-end without supervision and saves real time, it’s leverage.

If it just replaces clicking two buttons manually, it’s over-engineered.

Where the Security Model Becomes High-Risk

An internet-connected AI agent with system-level access dramatically increases your blast radius.

Let’s be explicit.

Clawdbot can:

Read your files.

Execute shell commands.

Access email.

Access browser sessions.

Potentially access SSH keys and crypto wallets.

That means a compromised dependency or malicious prompt injection could exfiltrate sensitive data.

Reddit critics often describe this as a “supply-chain attack dream.” They’re not wrong.

Major risks include:

Prompt injection

A malicious web page could instruct the LLM to retrieve sensitive data from your system and send it elsewhere.

Dependency compromise

Open-source projects depend on many packages. A compromised dependency could gain system-level access.

Social engineering

An attacker could manipulate the LLM into executing harmful actions.

Open source does not eliminate runtime risk. Inspectable code does not equal safe execution.

Mitigation strategies if you insist on running it:

Use a dedicated machine (an isolated VM environment is recommended).

Do not store crypto wallets or SSH keys on that machine.

Restrict file system permissions.

Segment network access.

Avoid running with unnecessary elevated privileges.
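One way to reduce privileges in practice is to gate every shell command the model proposes. The sketch below is a minimal allowlist check, assuming commands arrive as plain strings. The allowlist contents and the `is_allowed` name are hypothetical, and this is not a feature Clawdbot is known to ship.

```python
import shlex

# Hypothetical allowlist; pick the smallest set your workflows need.
ALLOWED_PROGRAMS = {"ls", "cat", "uptime", "ping"}
# Characters that let one command chain or redirect into another.
SHELL_METACHARACTERS = set(";&|><`$")


def is_allowed(command: str) -> bool:
    """Return True only for a single allowlisted program with no shell tricks."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        parts = shlex.split(command)
    except ValueError:  # unbalanced quotes and similar
        return False
    return bool(parts) and parts[0] in ALLOWED_PROGRAMS


print(is_allowed("uptime"))                          # True
print(is_allowed("cat notes.txt; curl evil.test"))   # False
```

An allowlist like this complements the other mitigations rather than replacing them. `cat` on a private key would still pass the check, which is why file system permissions matter, and approved commands should be run as an argument list (for example `subprocess.run(shlex.split(command))`) rather than through a shell.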

In enterprise environments, we never deploy AI agents with unrestricted root access.

We isolate execution layers, enforce policy engines, and maintain audit logging.

That maturity layer is absent in most personal agent setups today.
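Audit logging, at least, is easy to add to a personal setup. Here is a minimal sketch that appends one JSON line per attempted tool call. The field names and the `audit` function are assumptions for illustration.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def audit(log_path: Path, tool: str, args: dict, allowed: bool) -> dict:
    """Append one JSON line describing an attempted tool call."""
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "args": args,
        "allowed": allowed,
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry
```

Logging denied calls as well as allowed ones matters here: a burst of rejected commands is often the first visible sign of a prompt injection attempt.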

Setup Complexity and Operational Friction

Clawdbot behaves like infrastructure tooling, not consumer software.

Installation often involves:

Node.js and pnpm setup.

Environment variable configuration.

Messaging bot token generation.

Discord message content intents.

Port management.

Gateway restarts.

Common issues reported:

“Port already in use.”

Non-responsive bot instances.

Misconfigured state directories.

Inconsistent model behavior.
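The "port already in use" error usually means an earlier gateway process is still running. A quick check before restarting can save some guesswork. This is a generic sketch using Python's standard `socket` module, and the port number in the usage line is a placeholder, not Clawdbot's documented default.

```python
import socket


def port_is_free(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if we can bind to host:port, meaning nothing else holds it."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        try:
            s.bind((host, port))
        except OSError:
            return False
    return True


# Placeholder port for illustration; substitute the one from your own config.
print(port_is_free(8080))
```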

The gap between viral demo and first successful deployment is wide.

This is not plug-and-play. It’s closer to self-hosted DevOps infrastructure.

Cost Structure and Token Economics

The software is free. The models are not.

Clawdbot depends on API calls to commercial LLMs. That means recurring costs.

Reported usage patterns:

$3–5 per day for moderate hobby use.

Heavy automation potentially approaching $300 per month.
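The arithmetic behind these figures is simple enough to check yourself. The sketch below projects daily spend over a 30-day month and estimates daily spend from token counts. The per-million-token prices are parameters you supply from your provider's current pricing; none are assumed here.

```python
def monthly_cost(daily_usd: float, days: int = 30) -> float:
    """Project a daily spend over a month."""
    return daily_usd * days


def daily_token_cost(calls: int, in_tokens: int, out_tokens: int,
                     usd_per_m_in: float, usd_per_m_out: float) -> float:
    """Estimate daily spend from calls per day and average tokens per call."""
    per_call = in_tokens * usd_per_m_in + out_tokens * usd_per_m_out
    return calls * per_call / 1_000_000


low, high = monthly_cost(3), monthly_cost(5)
print(f"${low:.0f} to ${high:.0f} per month")  # prints "$90 to $150 per month"
```

So the reported $3–5 per day for hobby use works out to roughly $90–150 per month, which is already a meaningful share of the heavy-automation figure.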

Some users express frustration that a Claude Max subscription cannot fully substitute for API access. That creates a “double pay” perception.

Another complexity: model escalation. The agent may switch to more powerful models mid-workflow, increasing token costs unpredictably.

Local models can reduce API spend, but stability and tool-use performance often degrade.

From a business standpoint, recurring automation costs must correlate with measurable output. At Troniex Technologies, when we implement AI automation systems, we quantify time saved, error reduction, and revenue impact before scaling usage.

If you cannot measure value, costs will feel painful.

Overkill vs Genuine Automation Leverage

Capability alone does not justify deployment.

Overkill scenarios:

Sending basic reminders.

Fetching weather updates.

Simple web lookups.

Smartphones already do these cheaply and safely.

High-leverage scenarios:

Multi-step workflows across systems.

Recurring operational tasks.

Revenue-linked automations.

High-frequency repetitive processes.

A simple evaluation checklist:

How often does this task occur?

How much time does it save?

What is the failure risk?

What is the monthly token cost?

Does it impact revenue or operations?

If you cannot answer these clearly, you’re probably experimenting, not optimizing.
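Part of this checklist can be turned into a rough number. The sketch below is one possible way to score a workflow, assuming you can estimate time saved per run and put an hourly value on your time. It ignores failure risk, which still needs a judgment call, and the function name is hypothetical.

```python
def monthly_net_value(runs_per_month: int, minutes_saved_per_run: float,
                      hourly_rate_usd: float,
                      monthly_token_cost_usd: float) -> float:
    """Value of time saved per month minus what the automation costs to run."""
    hours_saved = runs_per_month * minutes_saved_per_run / 60
    return hours_saved * hourly_rate_usd - monthly_token_cost_usd


# Inbox triage: 60 runs a month, 10 minutes saved each, time valued at $50/hour.
print(monthly_net_value(60, 10, 50, 120))   # 380.0
# A daily reminder: 30 runs, half a minute saved each, same token bill.
print(monthly_net_value(30, 0.5, 50, 120))  # -107.5
```

A negative result does not make an experiment worthless, but it does tell you that you are paying to tinker rather than to save time.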

Branding Controversy and Trust Implications

The rebrand from Clawdbot to Moltbot/OpenClaw occurred after trademark pressure from Anthropic.

During the transition:

GitHub and social handles were reportedly hijacked.

A fake “ClawdBot” token launched on Solana.

It briefly reached significant market capitalization before crashing.

Even if unrelated to the core project, the episode raised governance concerns.

If branding and handles can be hijacked easily, some users question whether root-level trust is wise.

Trust in infrastructure systems depends on operational maturity, not just technical novelty.

Who Should Actually Run Clawdbot?

This is for technically mature operators comfortable managing risk.

Ideal users:

Developers.

Automation-heavy founders.

Infrastructure enthusiasts.

Indie hackers who understand threat models.

Not ideal:

Non-technical users.

People storing sensitive financial assets on the same machine.

Organizations lacking policy controls.

If deployed, start with:

A dedicated machine.

Minimal permissions.

No wallet or key storage.

Telegram-only integration.

Clear monitoring and logs.

For organizations, deploying AI agents with system-level access requires sandboxing, policy enforcement, and audit trails. This is where structured AI execution layers, like those we design at Troniex Technologies, become critical.

Personal experimentation and enterprise deployment are not the same thing.

The Future of Personal AI Agents

The concept is real.

Proactive, tool-using agents represent a genuine UX shift. Messaging-first automation feels different from static web chat.

But current implementations are experimental.

Expect the next phase to include:

OS-level sandboxed agents.

Fine-grained permission systems.

Policy-constrained execution.

Enterprise-grade orchestration.

Transparent cost controls.

Clawdbot proves the idea works. It does not yet prove it is production-safe.

Treat it as experimental infrastructure.

If you are evaluating AI agents for business use, security architecture, execution isolation, and ROI modeling must come first. That is where mature AI automation frameworks differentiate from viral demos.

Final Takeaway

Clawdbot is not hype. But it is not mature infrastructure either.

It demonstrates what personal AI agents can become. It also exposes the risks of giving LLM-driven systems unrestricted system access.

The future of AI agents will belong to architectures that combine autonomy with governance.

Experiment carefully. Deploy strategically.

FAQ

What is Clawdbot?

Clawdbot is an open-source personal AI agent that runs locally and executes system-level actions via messaging platforms.

Is Moltbot the same as Clawdbot?

Yes. Moltbot/OpenClaw is the rebranded version of Clawdbot following trademark pressure.

Is Clawdbot safe to run?

It can be safe with strict isolation and limited permissions, but its architecture increases risk if misconfigured.

How much does Clawdbot cost?

The software is free, but API usage for LLMs can range from a few dollars per day to several hundred per month, depending on usage.

Can Clawdbot run local models?

Yes, via tools like Ollama. However, tool-use workflows often require large context windows and stable model behavior.

Is Clawdbot worth it?

It depends. For high-frequency, multi-step automation, it can create real leverage. For simple reminders or novelty tasks, it may be overkill.